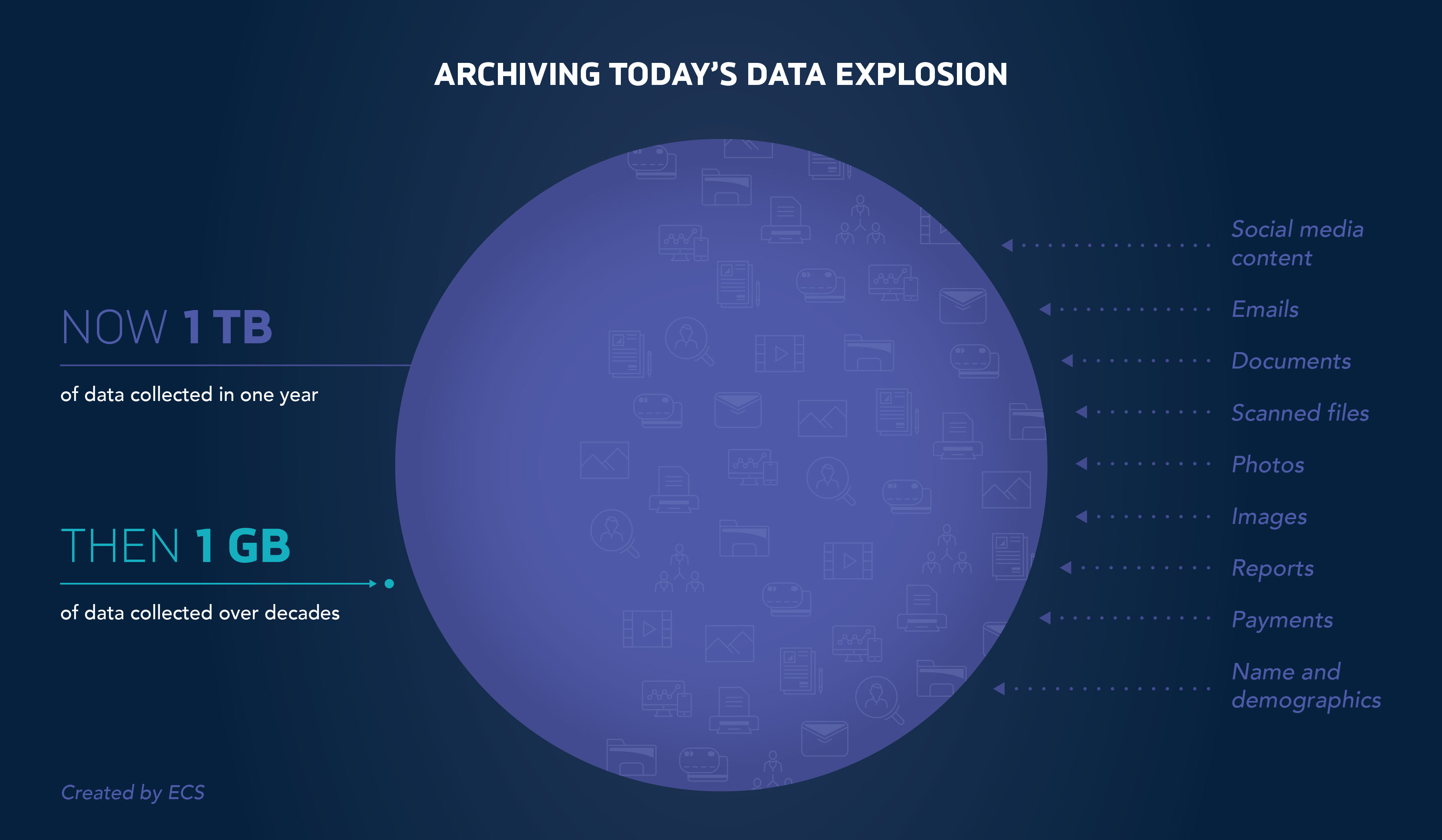

The volume and variety of data being ingested and stored are staggering. It used to take decades to produce petabytes of data; now it often takes about a year. That volume is projected to double over the next year, and double again the year after. How will all this data be ingested, and where will it be stored? The answer is the cloud. For organizations that store, manage, and share large amounts of data (records, documents, materials), the cloud makes it possible to collect and archive this information efficiently and cost-effectively, addressing myriad data storage challenges right now.

What is all this data?

Data comes from a wide variety of sources, from individuals to global organizations. It is generated, shared, ingested, and stored. Looking at just one of our clients, the National Archives and Records Administration (NARA), we see structured datasets that may take the form of CSV files, spreadsheets, or database reports. This information includes:

- names and demographics

- payments

- information from third parties

We also see unstructured datasets that may take the form of PDFs, Microsoft Word files, or other documents. This information includes:

- social media content

- emails

- official documents

- scanned files

- photos, screenshots, or other images

- reports

Organizations like NARA take in not just their own data but other organizations' data as well, which creates massive curation and storage needs.

For NARA alone, there are several miles of paper archives that must be processed, ingested, and stored, and the backlog grows every year. At peak times, NARA ingested between one and ten TB a day, including logs from the software systems used in processing.
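To put that peak ingest rate in network terms, here is a rough back-of-the-envelope sketch. It assumes decimal units (1 TB = 10^12 bytes), data spread evenly over 24 hours, and no protocol overhead or retries, so real transfers would need headroom above these figures.

```python
# Back-of-the-envelope: sustained bandwidth needed to ingest a daily volume.
# Assumptions: decimal units (1 TB = 10**12 bytes), transfer spread evenly
# over 24 hours, no protocol overhead, retries, or peak-hour bursts.

SECONDS_PER_DAY = 24 * 60 * 60


def required_mbps(tb_per_day: float) -> float:
    """Return the average throughput in megabits per second."""
    bits_per_day = tb_per_day * 10**12 * 8
    return bits_per_day / SECONDS_PER_DAY / 10**6


if __name__ == "__main__":
    for tb in (1, 10):
        print(f"{tb:>2} TB/day -> {required_mbps(tb):,.1f} Mbit/s sustained")
```

At the ten-TB end of that range, the average works out to roughly 926 Mbit/s around the clock, close to a full 1 Gbit/s link, which is why bandwidth is one of the first questions to ask when choosing a cloud solution.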

How do you select the best cloud solution for your organization?

A lot goes into selecting a cloud solution, but it starts with three questions:

- Is the project one-time or ongoing?

- Is the project time critical?

- Will the project require high bandwidth?

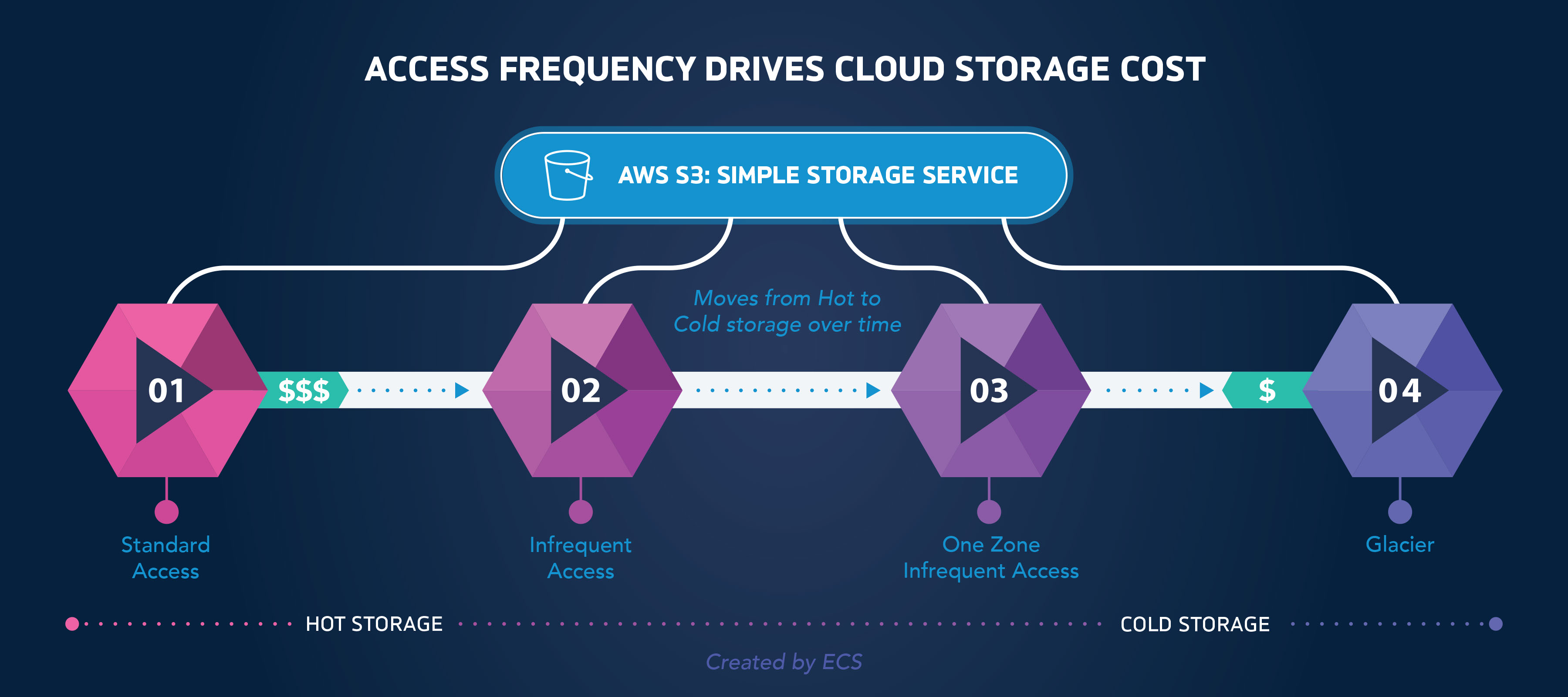

When addressing these three questions, it is important to understand how cloud storage costs are structured. Cloud providers offer hot and cold storage tiers based on how often data is accessed, with cold storage typically costing about one-tenth as much as hot storage. In AWS, Simple Storage Service (S3) offers a continuum of storage classes, from S3 Standard (hot) through S3 Standard-Infrequent Access and S3 One Zone-Infrequent Access to S3 Glacier (cold). Using S3 lifecycle policies, documents can be moved across storage classes automatically according to an organization's retention policies. This helps organizations budget, because they pay for what they use according to a predictable schedule.

Each storage class suits data that is accessed frequently, occasionally, or rarely. At NARA, for example, application logs are initially stored in S3 Standard, move to S3 Standard-Infrequent Access 30 days after ingestion, and move to S3 Glacier, the class for rarely read data, after 90 days. Federal regulations and compliance requirements may also mandate that some types of records be retained permanently.
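The log-tiering schedule described above can be expressed as an S3 lifecycle rule. The Python sketch below builds the configuration as a plain dictionary in the shape S3's lifecycle API accepts; the rule ID and the `logs/` prefix are hypothetical placeholders, not NARA's actual settings. Because some records must be kept permanently, the rule only transitions objects and deliberately sets no expiration.

```python
import json


def build_log_lifecycle(prefix: str = "logs/") -> dict:
    """Lifecycle rule: S3 Standard -> Standard-IA at 30 days -> Glacier at 90 days.

    The rule ID and default prefix are hypothetical placeholders.
    No "Expiration" action is set, so this rule never deletes objects.
    """
    return {
        "Rules": [
            {
                "ID": "tier-application-logs",  # hypothetical rule name
                "Filter": {"Prefix": prefix},  # applies only to keys under this prefix
                "Status": "Enabled",
                "Transitions": [
                    # Days are counted from each object's creation date.
                    {"Days": 30, "StorageClass": "STANDARD_IA"},
                    {"Days": 90, "StorageClass": "GLACIER"},
                ],
            }
        ]
    }


if __name__ == "__main__":
    print(json.dumps(build_log_lifecycle(), indent=2))
```

With the AWS SDK for Python (boto3), a dictionary like this could be applied through the S3 client's `put_bucket_lifecycle_configuration(Bucket=..., LifecycleConfiguration=...)` call, using your own bucket name.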

Access Frequency Drives Cloud Storage Cost
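To see why access frequency drives cost, the sketch below compares keeping one gigabyte of logs in hot storage for a year against the 30/90-day tiering schedule described above. Only the roughly one-tenth cold-to-hot price ratio comes from the text; the Standard-IA price is a hypothetical midpoint, all prices are relative units per GB-month rather than real AWS rates, and retrieval, request, and minimum-duration charges are ignored.

```python
# Relative monthly price per GB. Only the cold = 1/10 of hot ratio comes from
# the article; the STANDARD_IA figure is a hypothetical placeholder.
MONTHLY_PRICE = {"STANDARD": 1.0, "STANDARD_IA": 0.5, "GLACIER": 0.1}
DAYS_PER_MONTH = 30  # simplifying assumption


def storage_cost(retention_days, schedule, prices=MONTHLY_PRICE):
    """Cost of storing 1 GB for `retention_days` under a tiering schedule.

    `schedule` is a list of (start_day, storage_class) pairs sorted by
    start_day, beginning at day 0. Each tier lasts until the next tier
    starts or until the retention period ends.
    """
    total = 0.0
    for i, (start, storage_class) in enumerate(schedule):
        next_start = schedule[i + 1][0] if i + 1 < len(schedule) else retention_days
        end = min(next_start, retention_days)
        if end > start:
            total += (end - start) * prices[storage_class] / DAYS_PER_MONTH
    return total


if __name__ == "__main__":
    all_hot = storage_cost(365, [(0, "STANDARD")])
    tiered = storage_cost(365, [(0, "STANDARD"), (30, "STANDARD_IA"), (90, "GLACIER")])
    print(f"all hot: {all_hot:.2f}  tiered: {tiered:.2f}  ratio: {tiered / all_hot:.0%}")
```

Under these assumed prices, the tiered schedule costs roughly a quarter of keeping everything hot for a year, and the longer records are retained, the closer the ratio gets to the one-tenth cold-to-hot price ratio.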

Real-time data collection and storage may require transient storage, high bandwidth, and time-sensitive analytics before the data is sent to permanent long-term storage. Cloud service providers (CSPs) offer a variety of services that capture high-velocity data (such as click-through streams), hold it in large data buffers, run analytics on it immediately, and support real-time decision making. Cloud-based real-time decision support tools give organizations a competitive advantage over the legacy process of generating reports several days or weeks after the fact.
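The buffer-then-analyze pattern can be illustrated with a small in-memory sliding window that keeps only the last N seconds of click events and answers "what is popular right now?" on demand. This is a simplified stand-in for the managed streaming services CSPs offer (for example, Amazon Kinesis), not a description of how those services work internally; it assumes a single process and events that arrive in timestamp order.

```python
from collections import Counter, deque


class SlidingWindowCounter:
    """Counts events per key over the most recent `window_seconds`.

    Assumes events are added in non-decreasing timestamp order.
    """

    def __init__(self, window_seconds: float):
        self.window = window_seconds
        self.events = deque()  # buffered (timestamp, key) pairs, oldest first
        self.counts = Counter()  # live per-key counts for the current window

    def add(self, timestamp: float, key: str) -> None:
        self.events.append((timestamp, key))
        self.counts[key] += 1
        self._evict(timestamp)

    def _evict(self, now: float) -> None:
        # Drop buffered events whose timestamp is at or before now - window.
        while self.events and self.events[0][0] <= now - self.window:
            _, old_key = self.events.popleft()
            self.counts[old_key] -= 1
            if self.counts[old_key] == 0:
                del self.counts[old_key]

    def top(self, n: int) -> list:
        """Return the n most frequent keys in the current window."""
        return self.counts.most_common(n)


if __name__ == "__main__":
    clicks = SlidingWindowCounter(window_seconds=60)
    for ts, page in [(0, "home"), (10, "search"), (20, "home"), (65, "home")]:
        clicks.add(ts, page)
    print(clicks.top(2))
```

The deque keeps eviction cheap because expired events are always at the front, so each event is added and removed exactly once.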

Data sensitivity and security can be addressed with encryption both in transit and at rest. Cloud platforms support end-to-end encryption through both native services and integrated third-party solutions.
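As one concrete illustration on AWS, encryption at rest can be enabled as a bucket default, and encryption in transit can be enforced with a bucket policy that denies any request not made over TLS. The sketch builds both documents as Python dictionaries; the bucket name and KMS key ID are hypothetical placeholders, and other CSPs and third-party tools offer equivalent controls.

```python
import json
from typing import Optional


def default_encryption_config(kms_key_id: Optional[str] = None) -> dict:
    """Default server-side encryption (at rest) using AWS KMS.

    If kms_key_id is None, S3 uses the AWS managed KMS key for S3.
    """
    default = {"SSEAlgorithm": "aws:kms"}
    if kms_key_id:
        default["KMSMasterKeyID"] = kms_key_id  # hypothetical customer-managed key
    return {
        "Rules": [
            {"ApplyServerSideEncryptionByDefault": default, "BucketKeyEnabled": True}
        ]
    }


def deny_insecure_transport_policy(bucket: str) -> dict:
    """Bucket policy that rejects any request not sent over TLS (in transit)."""
    arn = f"arn:aws:s3:::{bucket}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyInsecureTransport",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                "Resource": [arn, f"{arn}/*"],
                "Condition": {"Bool": {"aws:SecureTransport": "false"}},
            }
        ],
    }


if __name__ == "__main__":
    bucket = "example-records-bucket"  # hypothetical bucket name
    print(json.dumps(default_encryption_config(), indent=2))
    print(json.dumps(deny_insecure_transport_policy(bucket), indent=2))
```

With boto3, these could be applied via the S3 client's `put_bucket_encryption(Bucket=..., ServerSideEncryptionConfiguration=...)` and `put_bucket_policy(Bucket=..., Policy=json.dumps(...))` calls.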

If the project is not time sensitive and does not require high bandwidth, a less expensive cloud solution could be the answer. In all cases, encrypting data is a recommended best practice.

We'll continue this conversation in Part II, where we'll discuss monetary cost benefits as well as benefits that go beyond the budget.